Artificial Intelligence, Interactive Measurements, and Assemblage Theory

Fuck Heidegger.

What follows is (very, very loosely) based on the talk I gave at the Cultural AI conference at NYU two weeks ago. I would like to take this opportunity to thank Leif Weatherby and Tyler Shoemaker for organizing this unique gathering and for inviting me to share my perspective.

Last night, Ben Recht challenged me to come up with a “grand synthesis” of what we have been hearing here so far. I don’t know whether this qualifies, but I think I can at least articulate some common themes and suggest a useful conceptual framework for this emerging field of “cultural AI,” building on ideas taken from cybernetics, structuralism, the assemblage theory of Manuel DeLanda, and the process philosophy of Alfred North Whitehead.

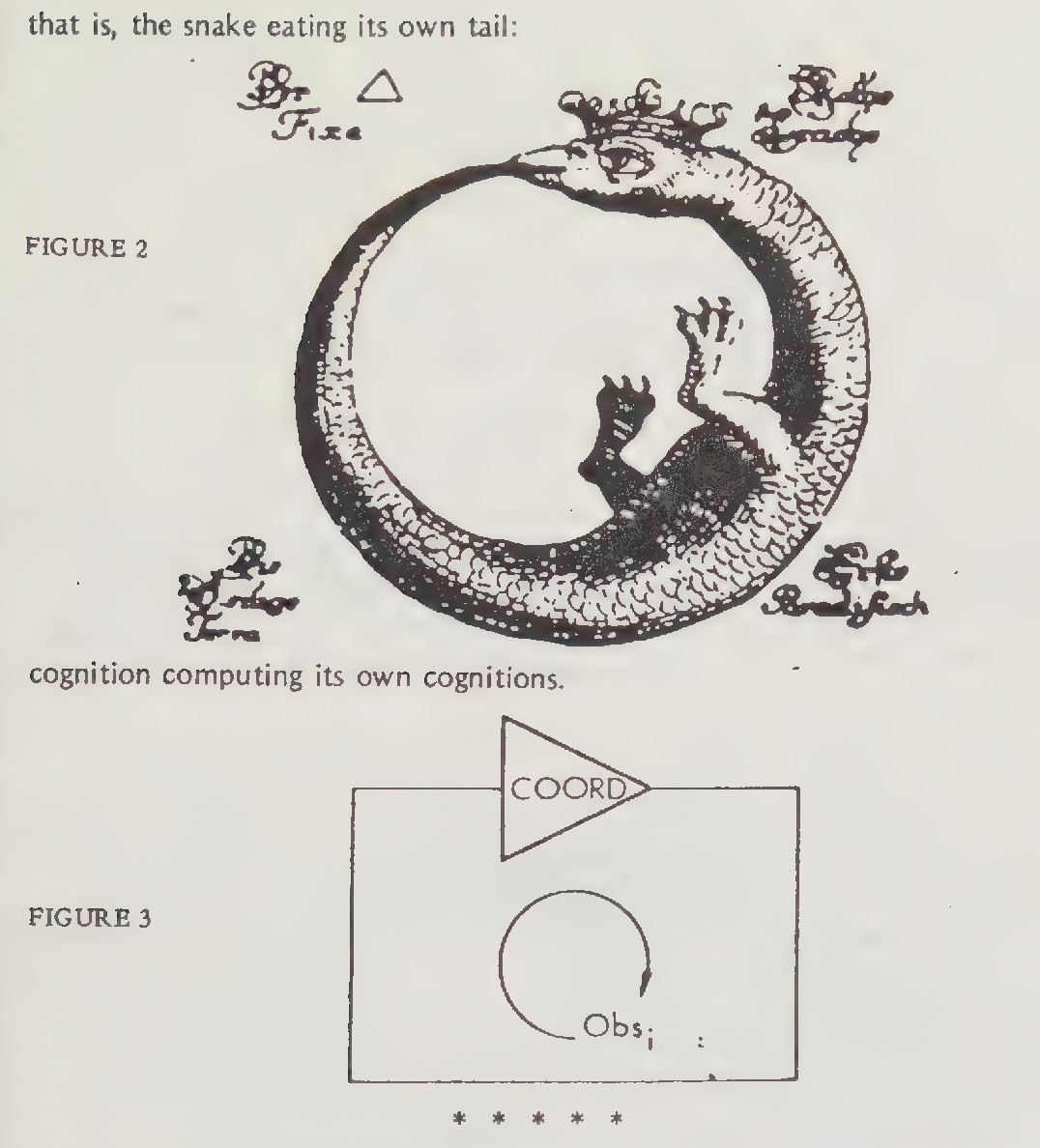

One of the goals of this meeting, namely to draw the outlines of an emerging field of “cultural AI,” immediately runs up against the question of definitions and scope. What do we mean by culture, what do we mean by AI, and what do all the complementary viewpoints we have heard this week (literary theory, sociology, anthropology, computer science, machine learning, digital humanities, critical theory) say about the nexus of AI and culture? If we were to adopt the structuralist perspective to conceptualize AI as a cultural phenomenon,1 then it would make sense to project both culture and AI onto the three constitutive dimensions of the structuralist view of systems, namely wholeness, transformation, and self-regulation.2 That is, we would view both “culture” and “AI” as closed, autonomous entities that are characterized by some set of invariants under a given collection of transformations. This decidedly cybernetic image is how, for example, structuralist linguistics has been viewing language or how structuralist anthropology has been viewing networks of social relations in human societies. What we would see from the outside is something like M.C. Escher’s “Drawing Hands,” a pair of hands drawing each other into existence, co-creating each other. Second-order cyberneticists like Heinz von Foerster would invoke the image of the ouroboros and talk about “cognition computing its own cognitions,” “eigenbehaviors,” and so on.

As a professor of engineering who works on control and systems theory, I must admit that I like this metaphor a lot. It captures a great deal of how we think about feedback, regulation, control, things of this sort. However, I want to emphasize that, to an external observer, feedback control creates an illusion of closure and autonomy. It takes effort to create and maintain feedback systems, and we also need to be cognizant of historical and other factors that explain how these systems come and stay together and, just as importantly, how they eventually come apart. Both cybernetics and structuralism, by and large, elide these aspects of control systems (what James Beniger called “being” and “becoming”) and focus exclusively on what systems look like from the outside when they function as intended. If we adopt a systems view of culture and AI, we need to supplement the structuralist, closed-systems lens with the open-systems view nicely expressed by William James:3

> Pluralistic empiricism knows that everything is in an environment, a surrounding world of other things, and that if you leave it to work there it will inevitably meet with friction and opposition from its neighbors. Its rivals and enemies will destroy it unless it can buy them off by compromising some part of its original pretensions.

William James often gets into trouble for these commercial metaphors (“buying off” here or “cash value” elsewhere), but they are valuable precisely because they highlight the role of friction, constraints, and trade-offs in complex systems. Put enough feedback control loops together, and they can create an illusion of an organism, a seamless whole composed of parts none of which can be conceived in isolation from others. Each component is, in fact, synonymous with, defined by, the totality of its relations to other components.4 Manuel DeLanda, to whose ideas I will come back in a moment, refers to these as relations of interiority.

This is one of the pitfalls of structuralism—it can tempt us into operating with reified generalities like “the society,” “the market,” or, closer to the theme of this meeting, “the culture” or “the artificial intelligence.”5 The key proposal I would like to put forward here, and thus to offer a synthesis of some of what we have heard earlier this week, is that we should adopt an alternative theoretical stance. If we want to understand complex systems under the rubric of cultural AI, then we should model them as assemblages, namely as wholes composed of relatively autonomous interacting parts characterized by relations of exteriority. These concepts, originating in the thought of Gilles Deleuze, have been developed into a comprehensive theoretical framework by Manuel DeLanda6 and, as it turns out, map pretty neatly onto how control and systems theorists reason about complex systems—in particular, how these systems are (or can be) constructed and how the patterns of interconnections between system components, both material and symbolic, give rise to the observed behavior of systems in interaction with their environments.

According to DeLanda, relations of exteriority

> imply, first of all, that a component part of an assemblage may be detached from it and plugged into a different assemblage in which its interactions are different. In other words, the exteriority of relations implies a certain autonomy for the terms they relate … . Relations of exteriority also imply that the properties of the component parts can never explain the relations which constitute a whole, that is, ‘relations do not have as their causes the properties of the [component parts] between which they are established.’

On DeLanda’s account, autonomy refers to each part’s capacity to affect and to be affected by others. This is, obviously, context-dependent because the specific way in which the parts are interconnected and how they interact will select which of the capacities will be exercised and which ones will not be. Assemblage theory is the study of such wholes constituted by relations of exteriority and of the historical processes producing, stabilizing, and destabilizing them.

What exactly are the components that make up assemblages? Following DeLanda, we can speak of material and expressive components. Material components can be persons, equipment, data centers, physical infrastructure. Expressive components are datasets, code, model weights, rules, norms, laws, regulations, expectations of roles, organizational structures. This is not a fixed characteristic, but more of a context-dependent role, and each component can occupy a variable position on the axis between purely material and purely expressive (cash value, anyone?). The other dimension has to do with processes that either stabilize the assemblage or destabilize it; DeLanda, following Deleuze, calls these opposite tendencies territorialization and deterritorialization. These define the boundaries of an assemblage; they can make them sharper, bring the components together, or they can work in the opposite way.

Provisionally, then, we can put forward the following points towards an “assemblage theory of AI systems”:

- AI systems must be understood through interaction between datasets and users
- datasets, users, interfaces, etc. form assemblages
- interaction is mutual measurement: coding/decoding
- the ongoing process of measurement is a process of change: territorialization/deterritorialization
- who decides what to measure? what to do with those measurements?

The first two bullet points are self-explanatory; they simply establish the overall conceptual framing. The next point introduces the idea of measurement and the related concepts of coding and decoding. I have discussed the measurement-centered view of machine learning elsewhere; here I want to frame it in the context of assemblage theory by appealing to a useful distinction Herbert Simon made between two types of descriptions of the world, namely process vs. data:7

> Pictures, blueprints, most diagrams, and chemical structural formulas are state descriptions. Recipes, differential equations, and equations for chemical reactions are process descriptions. The former characterize the world as sensed; they provide the criteria for identifying objects, often by modeling the objects themselves. The latter characterize the world as acted upon; they provide the means for producing or generating objects having the desired characteristics.

From this perspective, the basic epistemological claim underlying machine learning is that, to a large extent, it is possible to automate the extraction of process descriptions from data descriptions. In this sense, borrowing an apt formulation from Ben Recht, machine learning is engineered induction. Coding is the act of compressing data descriptions into process descriptions. However, in the spirit of Escher’s two hands drawing each other, there is a dialectic relating data and process: the flow from data to process is co-extensive with the flow from process to data. Decoding is the opposite act of generating data from process descriptions. This is, of course, the ethos of generative AI, but it is also the feedback loop that produces and reproduces culture. Novelty, creativity, and spontaneous order can arise here because process descriptions encapsulated in AI models are by themselves inert; they need an external stimulus or prompt in order for generation to take place.
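For readers who think in code, here is a deliberately tiny sketch of the coding/decoding loop described above. It is a toy, not a model of any actual AI system: the function names `code` and `decode`, the choice of a first-order linear recurrence as the "process description," and the numbers are all assumptions made purely for illustration.

```python
from typing import List, Tuple

Process = Tuple[float, float]  # (a, b) in the rule x[t+1] = a * x[t] + b


def code(data: List[float]) -> Process:
    """Coding: compress a data description (a list of observations)
    into a process description (two coefficients), by least squares."""
    xs, ys = data[:-1], data[1:]
    if len(xs) < 2:
        raise ValueError("need at least three observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    var_x = sum((x - mean_x) ** 2 for x in xs)
    if var_x == 0.0:
        raise ValueError("observations are constant; the rule is not identifiable")
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b


def decode(process: Process, prompt: float, steps: int) -> List[float]:
    """Decoding: generate data from the process description.
    The rule produces nothing until it is given a prompt (initial value)."""
    a, b = process
    out = [prompt]
    for _ in range(steps):
        out.append(a * out[-1] + b)
    return out


# Hypothetical observations, generated by x[t+1] = 0.5 * x[t] + 1.
observations = [1.0, 1.5, 1.75, 1.875, 1.9375]

process = code(observations)                                    # data -> process
regenerated = decode(process, prompt=observations[0], steps=4)  # process -> data
novel = decode(process, prompt=10.0, steps=4)                   # same rule, new prompt
```

Five observations are compressed into two coefficients, and the same two coefficients yield a different trajectory for every prompt. The fitted rule by itself generates nothing; the new data in `novel` appears only when an outside stimulus is supplied, which is the sense in which the process description is inert.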

This is what we heard in Henry Farrell’s talk on the nexus of social, cultural, and bureaucratic technologies and from Cosma Shalizi on generative AI as mechanized tradition. Traditions are process descriptions of lore; when Cosma quotes Jacques Barzun about intelligence and intellect, he is also referring to the data-process dialectic. And this is where the question of values comes in (although, in a sense, it never really went anywhere—it was right there in William James’ quote about friction and opposition and buying off). As the components making up the cultural AI assemblages exercise their capacities to affect (or measure) and to be affected (or to be measured) by one another, we have to ask ourselves who decides what to measure and what to do with these measurements. These are the “hidden governance” aspects of AI that were highlighted by Abbie Jacobs in her talk, and they must be treated on the same footing as the questions of rationality, optimization, and other things that engineers care about.

And, indeed, humanistically minded engineers have been emphasizing these issues and raising related concerns all along.8 For example, this is how Sanjoy Mitter introduces the question of system effectiveness:9

> System effectiveness is intimately tied to the issue of structure, the problem of measurement and the question of resources and values on the basis of which the system is evaluated for effectiveness … in a somewhat broad context where the systems can include both technological as well as social and economic systems. In order for systems to be effective, they have to be coherent … . The word coherence is being used here in the sense of Whitehead and is a concept which is broader than logical consistency. It requires viewing the system as a ‘whole’ which always has an environment and a value system (internal). Besides, the system residing in its environment is capable of observation by a multitude of external observers, each observer possessing its own value system.

This, once again, takes us back to William James’ pluralist empiricism, and it is very fitting to mention Whitehead here. His process ontology is in many ways similar to DeLanda’s assemblage theory because it also emphasizes exteriority of relations and the open systems view. Whitehead’s notion of coherence (which I have discussed elsewhere) is, as Steven Shaviro puts it10, “not logical, but ecological. It is exemplified by the way that a living organism requires an environment or milieu—which is itself composed, in large part, of other living organisms similarly requiring their own environments or milieus.” In addition to the dimensions ordinarily associated with instrumental rationality, it brings both ethics and aesthetics to bear on the problem of system design, instantiation, and maintenance. Moreover, emphasizing coherence and not just logical consistency prompts us to question various reified generalities that are constantly proffered by various actors as ultimately dispositive, such as the Silicon Valley framing of language as intelligence and of intelligence as a service. Shaviro puts it very nicely:11

> We cannot live without abstractions; they alone make thought and action possible. We only get into trouble when we extend these abstractions beyond their limits . … This is what Whitehead calls ‘the fallacy of misplaced concreteness,’ and it’s one to which modern science and technology have been especially prone. But all our other abstractions—notably including the abstraction we call language—need to be approached in the same spirit of caution.

I would like to close by saying that I emphatically reject the original sin view of technology that pervades both the “AI ethics” and the “AI safety” camps. Ironically, it exposes their most dogmatic adherents as Heideggerian reactionaries who view technology as a revelation of an antihuman totalizing world order, which, depending on the camp you belong to, is either a colonialist profit maximizer or a superintelligent paperclip maximizer. Like Heidegger, they are obsessed with origins and are utterly uninterested in technology’s positive potential to realize what Whitehead called “creative advance into novelty.” As Shaviro argues, we would do better if we adopted Whitehead’s view instead:12

> Whitehead’s reservations about science run entirely parallel to his reservations about language. (By rights, Heidegger ought to treat science and technology in the same way that he treats language: for language itself is a technology, and the essence of what is human involves technology in just the same way as it does language).

In summary, it seems to me that assemblage theory can offer a compelling conceptual framework for the emerging field of “cultural AI,” bringing together the complementary perspectives of technologically minded humanists and humanistically minded technologists. Thank you!

1. Leif Weatherby, Language Machines: Cultural AI and the End of Remainder Humanism, 2025.

2. See, for example, Jean Piaget, Structuralism, 1968.

3. William James, A Pluralistic Universe, 1909.

4. This is reminiscent in some ways of category theory, where the main role is played not by the objects of a category but by the web of relations (or morphisms) connecting the objects. This is why many authors like to bring up category theory in the context of structuralism. However, category theory offers many ways of transcending the seemingly fixed nature of objects as determined by their relation to other objects, for example by using various notions of duality, where the objects of a category become morphisms of another category and vice versa.

5. This is why Piaget argues that it is important to complement structural analysis with a dynamical account that would explain how a given structure came to be.

6. Manuel DeLanda, A New Philosophy of Society: Assemblage Theory and Social Complexity, 2006.

7. Herbert A. Simon, The Sciences of the Artificial, 1981. Simon uses “state description,” but to a control theorist “state” has a very definite meaning, so I will follow Alistair MacFarlane and use “data description” instead.

8. Norbert Wiener, The Human Use of Human Beings: Cybernetics and Society, 1950.

9. Sanjoy K. Mitter, “On system effectiveness,” 2002.

10. Steven Shaviro, Without Criteria: Kant, Whitehead, Deleuze, and Aesthetics, 2009.

11. Shaviro, ibid.

12. Once more, with feeling: fuck Heidegger!

This was really interesting and so rich to read—I'm glad that a version of the conference talk made its way to your newsletter! A few ideas here that felt particularly compelling (and that I'm now very excited to repurpose and try out elsewhere)…

1. Your note that modeling the world in terms of feedback systems 'creates an illusion of closure and autonomy,' and perhaps fails to recognize all the external social/historical/economic events that eventually impinge upon systems and reshape them—the 'relations of exteriority' that DeLanda describes.

2. The bulleted list of what AI systems are composed of, if we treat them as these assemblages that relate to the outer world in constantly-shifting ways. I also love the framing of interaction as 'mutual measurement', and must think about this framing more! And the whole discussion of process and data as constantly reshaping each other…like you might have a process that requires the data to be in a particular shape (so it can be operated on in a certain way); and then you might discover some trait of the data that demands your process be re-implemented or re-understood in order to effectively handle that trait and use it…

3. The really amazing Steven Shaviro quote you included (I hadn't heard of him before, but love this framing): 'We cannot live without abstractions; they alone make thought and action possible. We only get into trouble when we extend these abstractions beyond their limits…it’s [a fallacy] to which modern science and technology have been especially prone. But all our other abstractions—notably including the abstraction we call language—need to be approached in the same spirit of caution.'

4. The great and very apt characterization of AI ethics/safety as operating off an 'original sin view of technology.'

Thank you for sharing this!!

"(By rights, Heidegger ought to treat science and technology in the same way that he treats language: for language itself is a technology, and the essence of what is human involves technology in just the same way as it does language)." More than an echo of Uncle Ludwig here.